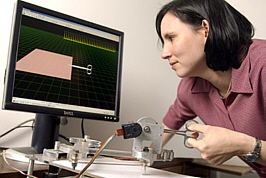

A press release from Johns Hopkins University describes work being done to incorporate haptic (i.e., touch-related) feedback into surgical systems. I first heard about such technologies when touring the University of North Carolina’s computer science department a year or so ago.

In the case of the Johns Hopkins technology, doctors using robotic surgical systems receive an additional layer of feedback intended to restore their sense of touch during robotic procedures. The Carolinians, on the other hand, are using a similar feedback mechanism to manipulate things at the nanoscale, making such objects feel “sticky” or repulsive (in the sense of electrical or magnetic repulsion) via feedback from a mechanical interface.

This is kewl.

While it does not necessarily “visualize” data, haptic feedback offers a remarkable way of interacting with datasets. It would be great to see technology like this in a science center. Can you imagine stepping up to a display and being able to feel the electrical forces in a 3-D molecule? Or maybe you could touch an image from an electron microscope? Or perhaps you could feel the gravitational or pressure differences on a variety of planets? Sounds like (insightful) fun to me.